Market Insights

Why SIBOS matters to the AI market.

SIBOS matters because AI costs don’t scale the way most teams expect. As usage grows, token spend becomes a variable operating cost that compounds across every workflow, every user, and every automated process. What starts as a manageable API bill can quickly become a margin problem, especially when the same prompts, repeated context, and unnecessary reasoning are being sent to large models thousands of times a day. SIBOS is built to solve that problem at the source: before waste reaches the LLM.

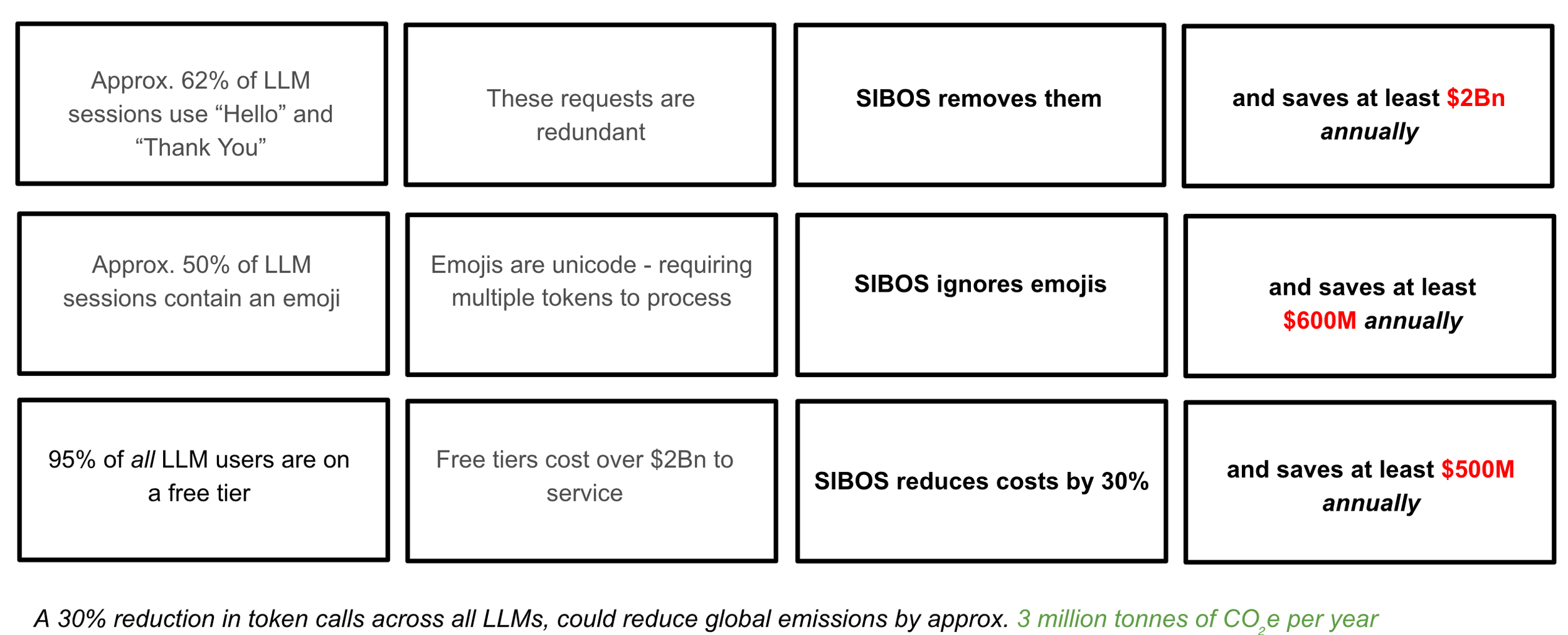

At its core, SIBOS reduces token cost by removing low-value tokens and redundant inference from AI interactions. That means less prompt bloat, less repeated context, fewer overlong outputs, and smarter routing of requests to the right model for the task. Many AI systems send simple requests to premium models by default, even when a lower-cost model could deliver the same outcome. SIBOS introduces policy-controlled routing and fallback logic so businesses can preserve high-cost model usage for high-value tasks, while automatically shifting simpler requests to lower-cost options, without compromising quality.

This has a direct commercial impact. Lower token usage reduces inference spend, improves cost per task, and creates more predictable unit economics. Instead of reacting to rising AI bills with pricing changes, top-ups, or usage caps, businesses can reduce the underlying cost driver itself. The result is better margins, more throughput per dollar, and a stronger foundation for scaling AI products and workflows sustainably.

There is also an environmental benefit. Every unnecessary token processed consumes compute and energy. At small scale this is easy to ignore, but at production volume the waste adds up quickly. By reducing redundant inference and minimizing token-heavy interactions, SIBOS helps organizations lower the compute intensity of their AI workloads. In practical terms, that means less energy burned to achieve the same business outcome, making AI operations not just cheaper, but more efficient and more responsible.

One of the most overlooked cost drivers in AI is how “small” prompt habits become expensive at scale. Repeated instructions, verbose formatting, duplicate context, excessive politeness, and unconstrained output lengths all increase token usage. Individually, these seem minor. Across millions of requests, they become a real line item. SIBOS addresses this with enforced efficiency guardrails: lean prompting, bounded outputs, context reuse, and model selection policies that are applied consistently across the stack. That’s how token reduction becomes a durable operating advantage, not a one-off prompt tweak.